What Does Your Content Say About Your Company Culture?

Marketing Insider Group

DECEMBER 9, 2020

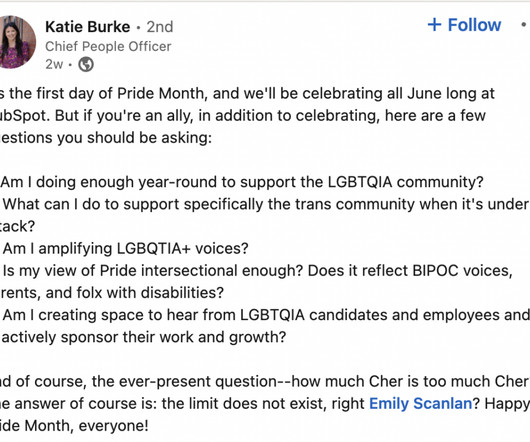

Building a positive, inspiring company culture matters more than ever. Your organization's culture affects the talent you attract, how engaged your employees are at work, and which customers choose your brand over others. It also reflects your core brand values and mission.
